Welcome!

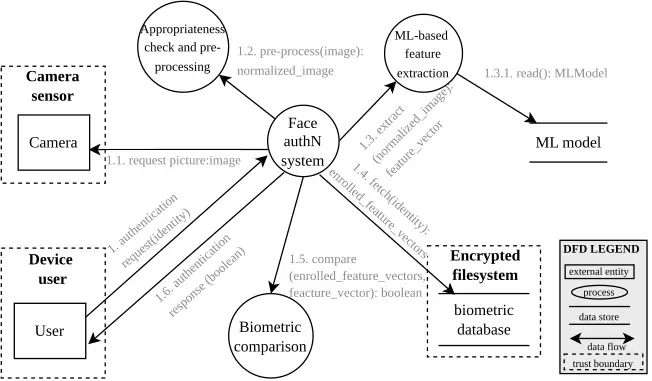

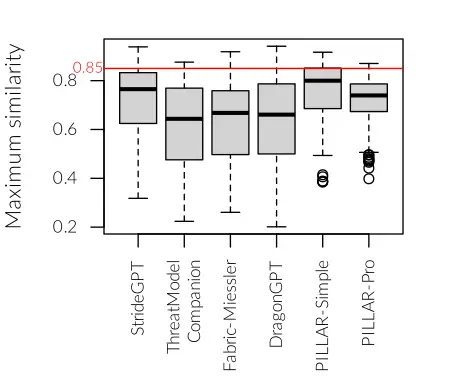

Welcome to this month’s edition of Threat Modeling Insider! In this edition, Dimitri Van Landuyt shares his view on using LLM tools for complete threat models.

Next, on the Toreon Blog, Georges Bolssens shares his take on “Managing unknowns assumptions in Threat Modeling”. It’s a very interesting read on hidden risks.

There’s plenty of other actionable insight ahead, so settle in and let’s get started!