The threat actors targeting a Retrieval-Augmented Generation (RAG) system vary with the system’s goals, exposure, and users. If a RAG system is open or exposed to the internet, it could be targeted by criminals or other financially motivated threat actors, especially if it gives access to data or functionality they can exploit. Information extraction, whether for reconnaissance in the early stages of an attack or for espionage, can also motivate such actors. However, one of the primary threat actors for these systems is often the insider. Accidental information sharing by legitimate users and deliberate exploitation by malicious insiders can both lead to serious security risks, whether as part of post-exploitation activity or as attempts to compromise corporate information for personal gain (Huang et al., 2024).

The primary threat category associated with RAG systems is Information Disclosure, given the vast amount of sensitive data these systems may access. Additionally, a lack of awareness of how these systems function could expose organizations to compliance risks, especially if RAG systems handle Personally Identifiable Information (PII) or Payment Information.

Despite their sophisticated design, RAG systems are susceptible to various threats, many stemming from their reliance on external data and generative capabilities. Vulnerabilities in these systems can exacerbate existing problems in corporate environments, such as poor access control or inadequate data governance and classification.

For this threat model, the STRIDE mnemonic was used to identify potential threats through a component-based approach. While this list is not exhaustive, it covers key threats relevant to a generic context. However, the specific threats may vary based on the project, company, or industry. No threat scoring is provided here, as risk levels depend on the unique characteristics of each organization or system.

To mitigate the threats associated with RAG systems, a combination of best practices, regular security audits, and cutting-edge defense mechanisms should be implemented.

- Implement strong access controls on source data: Granting appropriate access to the corporate knowledge retrieved by the RAG solution is crucial to avoid perpetuating existing security issues. While it may seem trivial, many companies still struggle to adhere to the principle of least privilege, especially in large, dynamic corporate environments. Since the responses generated by the LLM often depend on the current permissions of the user querying the information, permissions should be tailored to data sensitivity, ensuring that corporate knowledge is accessed and used only by those with explicit authorization. Both permissions and the outputs of the RAG system should be audited and reviewed regularly to prevent privilege escalation and unauthorized access. (Threats 1, 3, 4)

- Apply data masking and classification to prevent sensitive data exposure in documents sent to LLMs: Masking or redacting sensitive information, such as personal data or corporate secrets, before processing it through the LLM minimizes the risk of data breaches. Automated data classification tools and redaction techniques should be employed to prevent unauthorized data exposure. (Threats 1, 2, 7)

- Secure API integrations: This may seem like an obvious recommendation, but it cannot be emphasized enough, as basic security issues are still commonly found in APIs. Enforce strict API security measures such as authentication, rate limiting, and end-to-end encryption to minimize the risk of exposing sensitive information. Regularly review token scopes and access controls in APIs, and avoid overscoped tokens that may grant access to sensitive data to users without a need to know. Strong API hardening and proper token management are essential to reducing the risk of information disclosure. (Threat 4)

- Deploy monitoring and alert systems for data source integrity: Continuous monitoring of data sources for tampering, unauthorized changes, or unusual activity is crucial for detecting source manipulation. This should already be part of the existing defense and detection systems, but depending on the RAG system architecture, critical sources may require additional integrity validation directly within the RAG system. (Threat 2)

- Implement adequate system resilience: Standard measures such as rate limiting and throttling to control excessive requests, alongside load balancing and caching, can reduce strain on external sources. Optimizing query preprocessing and similarity search algorithms helps minimize unnecessary resource usage, while anomaly detection for suspicious query patterns helps prevent abuse. Additionally, setting resource quotas and using failover mechanisms improves overall system resilience. (Threat 6)

- Validate and verify outputs and sources: To mitigate the threat of hallucinations in RAG systems, especially in high-stakes fields such as healthcare, finance, and legal services, it is essential to implement rigorous validation and verification processes for the retrieved data. This involves cross-referencing outputs against trusted sources to ensure accuracy and reliability. Additionally, feedback loops that allow users to flag inaccuracies can help improve the system over time. Human-in-the-loop approaches, where domain experts review critical outputs, can further enhance the quality of the information generated. Regularly updating the knowledge base and applying automated error checks will also reduce ambiguity and incompleteness in the retrieved data, thereby reducing the potential for hallucinations. (Threat 5)

- Prompt filtering and validation mechanisms to detect and block injection attempts: Implement input validation and prompt sanitization to prevent prompt injection attacks. Predefined templates or rules can help ensure only safe inputs are processed by the LLM, reducing the risk of information disclosure and tampering. Additionally, comparing inputs against prior query patterns can help detect anomalous requests, and real-time monitoring with alert systems can flag suspicious input behavior for further investigation. (Threats 1, 7)

- Enable detailed logging and audit trails for source access actions: Maintain detailed logs of who accessed data, when, and what actions were taken. Granular audit trails help in forensic analysis and traceability, especially in regulated industries. Securely store logs for periodic review to detect anomalies or suspicious activities. To further enhance security, consider log anonymization to protect user privacy while maintaining traceability, and implement automated alerts to flag abnormal or unauthorized access patterns, improving the responsiveness and effectiveness of your logging system. (Threat 8)
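
Several of the mitigations above (access controls on source data, masking, prompt filtering, and audit logging) can be combined in the step that assembles context for the LLM. The following is a minimal Python sketch under stated assumptions, not a reference implementation: the `Chunk` type, the group-based permission model, the regular expressions, and all function names are hypothetical and chosen only for illustration. A real deployment would rely on the organization's identity provider, a dedicated data classification tool, and a more robust injection detector than a single deny-list pattern.

```python
import logging
import re
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("rag.audit")  # hypothetical logger name


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage plus the groups allowed to read its source (assumed model)."""
    doc_id: str
    text: str
    allowed_groups: frozenset


# Illustrative patterns only; they will miss many real-world formats.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")
# Naive deny-list for one well-known injection phrase; easy to bypass on its own.
INJECTION_RE = re.compile(
    r"ignore (?:all |any )?(?:previous|prior) instructions", re.IGNORECASE
)


def is_suspicious(query: str) -> bool:
    """Flag queries that match the (assumed) injection deny-list."""
    return bool(INJECTION_RE.search(query))


def filter_by_permission(chunks, user_groups):
    """Keep only chunks whose source the user is explicitly allowed to read."""
    return [c for c in chunks if c.allowed_groups & user_groups]


def mask_sensitive(text: str) -> str:
    """Redact e-mail addresses and card-like numbers before text reaches the LLM."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


def build_context(user, user_groups, query, retrieved):
    """Return masked, permission-filtered context, or None if the query is blocked."""
    if is_suspicious(query):
        audit_log.warning("blocked query user=%s reason=injection_pattern", user)
        return None
    permitted = filter_by_permission(retrieved, user_groups)
    # Audit trail: who accessed which sources, and how many were withheld.
    audit_log.info(
        "user=%s docs=%s denied=%d",
        user,
        [c.doc_id for c in permitted],
        len(retrieved) - len(permitted),
    )
    return "\n".join(mask_sensitive(c.text) for c in permitted)
```

In this sketch, calling `build_context("bob", {"eng"}, "How do I deploy?", chunks)` would return only the text of chunks whose `allowed_groups` include `"eng"`, with e-mail addresses and card-like numbers replaced by placeholders. Filtering happens before masking so that content the user may not read never reaches the prompt at all; masking then acts as a second layer for sensitive values inside documents the user is allowed to see.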

The threat model presented here provides a foundation for identifying threats and recommending mitigation strategies for RAG AI systems. However, like any threat model, it has some inherent limitations that should be acknowledged:

- Context-Specific Customization: This model is intentionally generalized. Each RAG system may face unique threats based on its specific context, such as industry regulations, user base, and operational environment, all of which are key to determining the relevant threats and their prioritization.

- Corporate Environment Approach: This threat model was produced from a corporate perspective, focusing on a RAG solution that accesses company information to retrieve or answer user queries. This approach may have left out other threats relevant to different applications, environments, or use cases.

- Evolving Attack Vectors: Cyber threats evolve rapidly, and new attack techniques may emerge that exploit weaknesses in generative AI and retrieval mechanisms. Continuous threat modeling is essential to keep pace with these developments and ensure the system remains secure.

- Technical Complexity: RAG systems consist of numerous interconnected components. Comprehensive security requires protecting not only the retrieval and generation modules but also the underlying infrastructure, databases, web interfaces, and APIs. Achieving this level of protection requires coordinated efforts across teams, including security, engineering, and data governance.

- Human Factor: Many threats, such as social engineering attacks or insider threats, stem from human error or malicious intent. Even the most technically sound systems can be compromised if users or administrators do not follow proper security protocols.

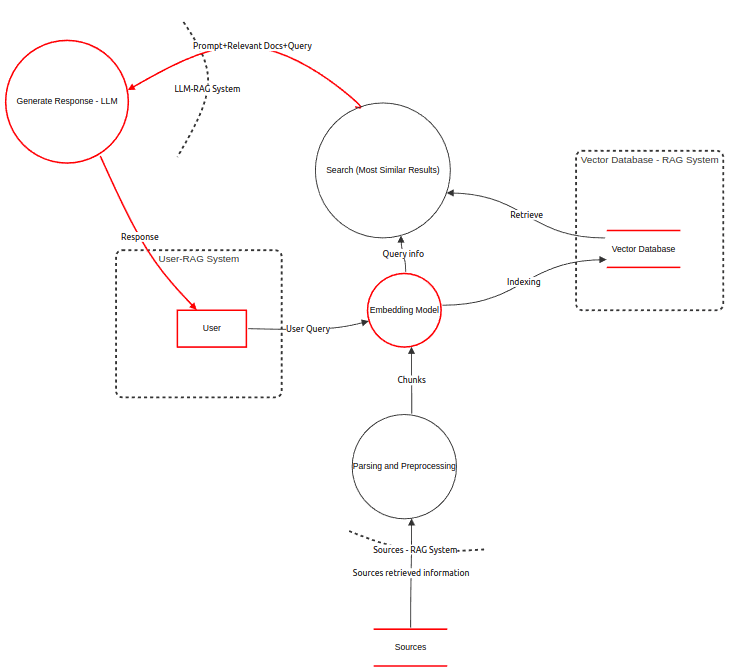

- RAG systems combine external data retrieval with generative models, enabling more relevant and fact-based responses.

- Key threats include unauthorized access, data leakage, denial of service, source tampering, and prompt injection.

- Mitigation strategies focus on strong access controls, detailed logging, input validation, rate limiting, and regular audits.

- The threat model can be used alongside other sources to help identify the threats specific to your own project.

In summary, securing RAG systems requires a proactive, multi-layered approach, integrating technical safeguards with organizational best practices.

Dichone, P. (2024, August 1). Learn RAG Fundamentals and Advanced Techniques. freeCodeCamp. Retrieved September 24, 2024, from https://www.freecodecamp.org/news/learn-rag-fundamentals-and-advanced-techniques/

Huang, K. (2023, November 22). Mitigating Security Risks in RAG LLM Applications | CSA. Cloud Security Alliance. Retrieved September 26, 2024, from https://cloudsecurityalliance.org/blog/2023/11/22/mitigating-security-risks-in-retrieval-augmented-generation-rag-llm-applications

Huang, K., Wang, Y., Goertzel, B., Li, Y., Wright, S., & Ponnapalli, J. (Eds.). (2024). Generative AI Security: Theories and Practices. Springer Nature Switzerland, Imprint: Springer.

Shostack, A. (2014). Threat Modeling: Designing for Security. Wiley.

Zou, W., Geng, R., Wang, B., & Jia, J. (2024). PoisonedRAG: Knowledge Corruption Attacks to Retrieval-Augmented Generation of Large Language Models. arXiv. https://arxiv.org/abs/2402.07867