Guest article

Elevation of MLsec: bringing threat modeling to machine learning practitioners

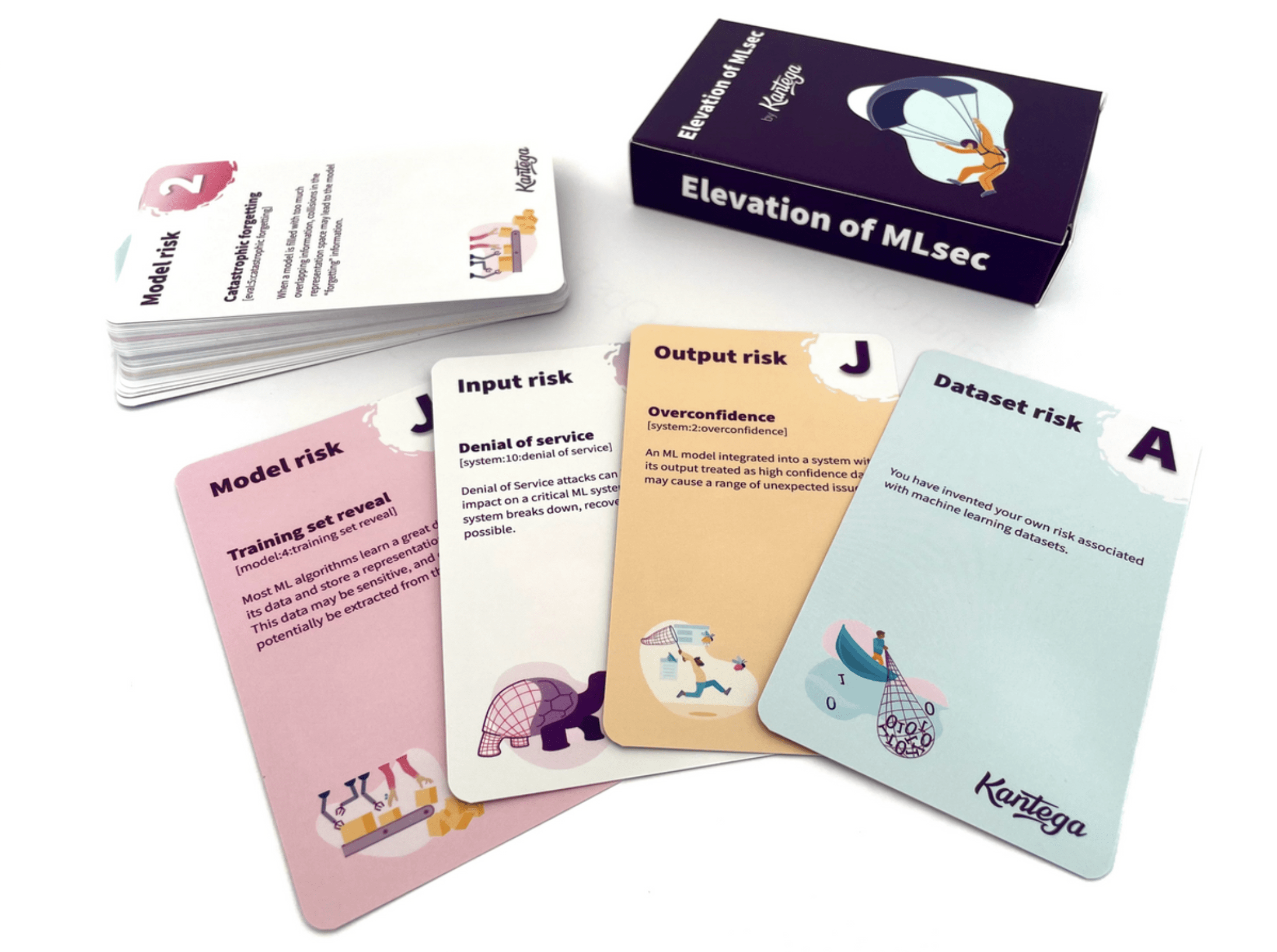

In 2024, I created the threat modeling card game Elevation of MLsec (EoML), which is a machine learning security extension of Adam Shostack’s well-known game Elevation of Privilege (EoP). Simply put, the cards in the game describe things that can go wrong during the whole lifecycle from machine learning (ML) training and engineering, to operations where models interact with the world.

I believe the game is highly relevant during a period where adoption of machine learning technology like large language models (LLMs) has taken off. Elevation of MLsec follows the same setup and rules as Elevation of Privilege, and can be combined with the original deck if you want to threat model a holistic view of a system with multiple components.

In this article, I will expand upon a bit of the game’s backstory and technical background, as well as my current experiences with applying the game and sharing it with practitioners.

The magic of gamification

During the autumn of 2022, I watched a lecture by Gary McGraw about his recent work in security engineering for machine learning. This lecture sparked in me a deep interest in machine learning viewed from a security and safety perspective. The work by Gary and his colleagues has been published through the Berryville Institute of Machine Learning (BIML), whose website makes their reports available under a Creative Commons license.

Later, in 2023, I got familiar with Elevation of Privilege through a presentation from a colleague who had used the game to teach threat modeling to his team. While I was already familiar with STRIDE, this was the first time EoP crossed my path. The presentation gave me an idea: why not take the report from BIML and put it into a game like EoP? I worked on an initial draft of the game where I took some of the notable risks from BIML and got help designing a card surface. During this period, BIML published their 2024 report that targeted risks within LLMs. This influenced the game design, which, in addition to generic ML risks, got a few cards that are specific to LLMs. As a nod to the OWASP crew, I also included a couple of items from the OWASP top 10 list for LLMs. After several rounds of playtesting, I officially announced the game roughly one year ago, in June of 2024. Since then, I have run several more playtests and workshops, and I am still actively collecting feedback from players.

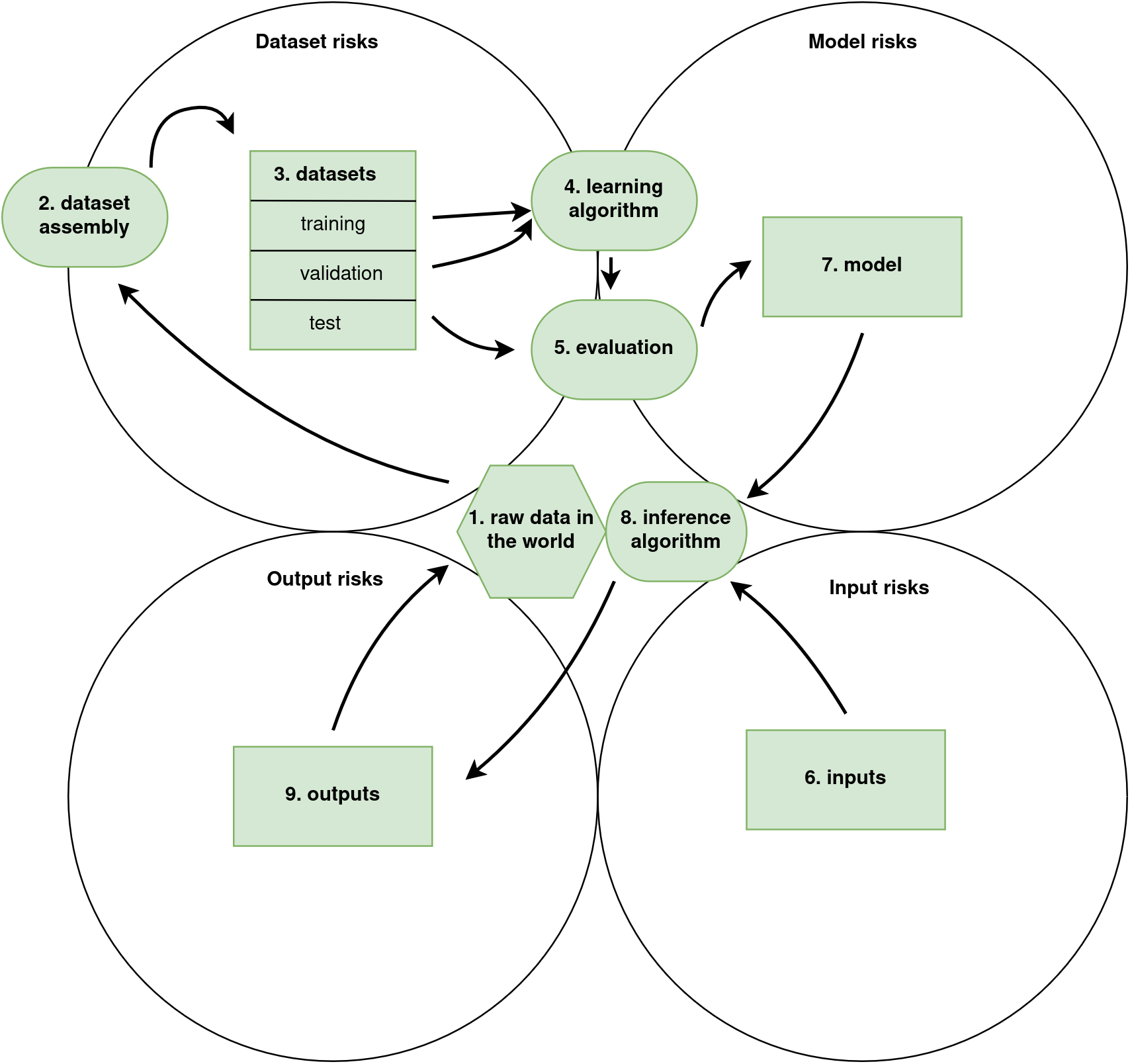

I like the BIML risk framework [2] because it offers a rigorous and holistic view of the ML lifecycle without hand-waving or fluffy terminology. Rather than talking about AI in anthropocentric terms, it focuses on technical details while keeping a critical view of the bigger picture. The game Elevation of MLsec applies the BIML risk framework, which uses nine components to describe a generic machine learning lifecycle. Particular emphasis is put on the details of the training process, where seemingly harmless decisions can have subtle but dangerous consequences for security or other quality aspects of the system.

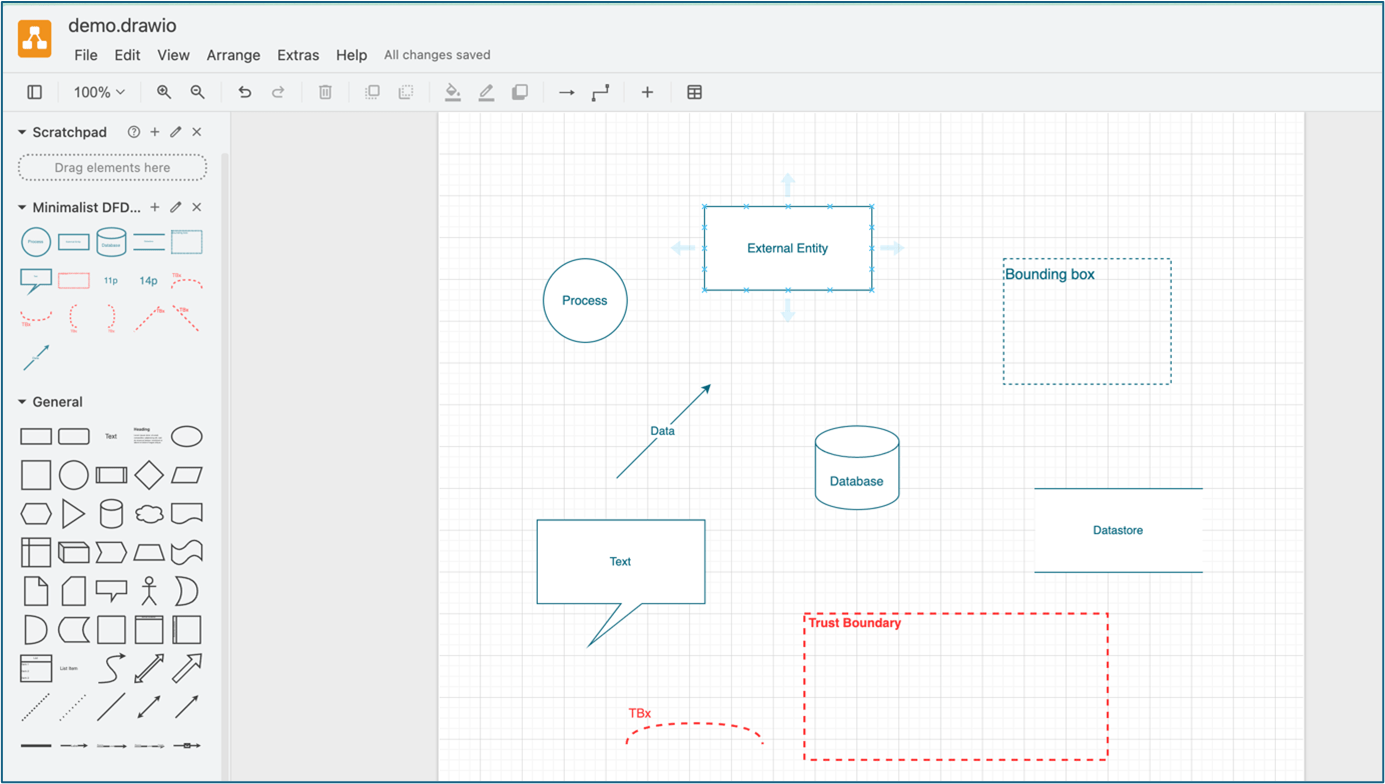

For the game, I used four of the components to get my four card suits. The lifecycle with respect to the card suits is shown in the figure below.

The card suits come from the four objects: 3. datasets, 6. inputs, 7. model, and 9. outputs. The ovals in components 1, 2, 4, 5 and 8 (and the polygon in component 1) are processes or data pools that form interfaces between the objects. Therefore, their risks can be considered risks of the object with which they interface. Risks about the system as a whole can also be assigned to one component in our context, as they are usually most present in one of the components.
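The grouping of lifecycle components into suits can be sketched as a small lookup. This is only an illustration: the four suit objects and their numbers come from the text above, but the assignment of each interface component (1, 2, 4, 5 and 8) to a particular object is my own assumption for the example and is not the game's official mapping. The names `SUITS`, `INTERFACES_WITH`, `suit_for` and `components_by_suit` are hypothetical.

```python
from collections import defaultdict

# The four objects that give the card suits (numbers and names from the text).
SUITS = {3: "datasets", 6: "inputs", 7: "model", 9: "outputs"}

# ASSUMPTION: which object each process or data-pool component interfaces with.
# This assignment is illustrative only, not taken from the game or from BIML.
INTERFACES_WITH = {1: 3, 2: 3, 4: 7, 5: 7, 8: 9}


def suit_for(component: int) -> str:
    """Return the suit under which risks of a lifecycle component are filed."""
    if component in SUITS:
        return SUITS[component]
    if component in INTERFACES_WITH:
        return SUITS[INTERFACES_WITH[component]]
    raise ValueError(f"unknown lifecycle component: {component}")


def components_by_suit() -> dict[str, list[int]]:
    """Group all nine lifecycle components by the suit that covers them."""
    grouped = defaultdict(list)
    for component in range(1, 10):
        grouped[suit_for(component)].append(component)
    return dict(grouped)


if __name__ == "__main__":
    for suit, components in components_by_suit().items():
        print(suit, components)
```

Whatever the exact assignment, the point of the design is that every one of the nine components ends up under exactly one of the four suits, so no part of the lifecycle is left without cards.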

Considering this lifecycle, let’s have a look at a few risks to illustrate the range of things that can go wrong in machine learning (ML):

- Data poisoning: Data is integral for the security of an ML system. That’s because an ML system learns to do what it does directly from data, and essentially becomes the data. If an attacker can intentionally manipulate the data being used by an ML system, the entire system can be compromised. Particularly with natural language, it is very hard to distinguish poison from “true” data.

- Transfer learning attack: Model transfer leads to the possibility that what is being reused may be a Trojaned (or otherwise damaged) version of the model.

- Transparency: It is easier to perform attacks undetected on a black-box system which is not transparent about how it works.

- Error propagation: When ML output is input to a larger decision process, errors in the ML subsystem may propagate in unforeseen ways.

- Input ambiguity: English, the main interface language for LLMs, is an ambiguous interface. Natural language can be misleading, making LLMs susceptible to misinformation.

These risks range from specific attacks by motivated threat actors to subtle mistakes in engineering decisions made by the builder of an ML system. Both may have negative consequences for the system that are worthwhile to consider. The risks in BIML are fundamental in nature, and address building blocks that underlie most deep learning models. The ML lifecycle they published is a great way to organize our thinking about the full process of ML. Because of its generality, the BIML framework can readily be narrowed down and used for a particular machine learning system. For example, BIML took their 2020 work about generic ML [2] and applied it to generic LLMs [3].

Other ML security frameworks

BIML’s particular emphasis on the engineering part of machine learning contrasts with MITRE ATLAS, which is a framework specifically listing various kinds of attacks. The OWASP top ten lists for machine learning and LLMs have more overlap with BIML. While the OWASP lists aren’t as extensive as BIML given their limitation of ten items, they are regularly maintained to incorporate new developments in the field.

Play testing

The last year has been spent sharing the game and testing it with various participants, both university students and industry professionals. I have been running workshops where cross-functional teams, with anyone from management to tech, have worked together to threat model a fictitious system. The play tests suggest that the game is a promising way to start discussions about what can go wrong while building or using machine learning technology. The players reported that they were surprised at how engaging it was. Players with a non-technical background, paired with technical colleagues, were able to threat model a system and get into the mindset of a security person.

Using it in practice

This game is one of many available tools that can enrich a product team’s threat modeling process. I think this game can be a good starting point for AI teams that would like to get started with threat modeling. Playing will hopefully yield a nice and broad overview of what can go wrong in your system, and get you started thinking about AI more the way the people at BIML do. Because of its compatibility with Elevation of Privilege, I also think Elevation of MLsec is a good choice for teams that are already experienced with threat modeling, but need somewhere to start with all this new AI stuff. With the BIML lifecycle as a foundation for thinking about machine learning security, I think you will have a better time absorbing other frameworks, organizing their contents into the components of the generic ML lifecycle.

Intended audience

I have reflected on where such a game might be useful. In my opinion, it is very powerful to tackle design discussions in a cross-functional team with representation from various disciplines outside pure technological roles. Still, the following roles may find use for these cards as a way to get started with security engineering in an organization that is employing machine learning in some way:

- Security practitioners can use this game to perform relevant threat modeling coaching with ML engineering teams or teams otherwise adopting AI.

- ML practitioners and engineers may use this deck as part of their threat modeling process.

- Software professionals integrating their “traditional software system” with an ML component can use these cards to get familiar with potential risks that come from integrating the ML component.

After using the game, it would be natural to explore the richer details of the BIML frameworks for ML in general [2], and for LLMs in particular [3].

The way forward

Moving forward, I am excited to see whether I can bring the game all the way into the heart of data science projects to get the developers started with threat modeling so they can reduce risk while building great things! I am also currently working on a second edition of the game that covers more ground, embeds some more modern developments, and fixes mistakes in the 1.0 version. I warmly welcome any feedback from people who have tried playing the game.

References

[1] Kowalski, S. J., Von Seth, E., & Zoto, E. (2023). Cs technopoly: A megagame for teaching and learning cybersecurity. Accelerating Open Access Science in Human Factors Engineering and Human-Centered Computing.

[2] McGraw, G., Figueroa, H., Shepardson, V., & Bonett, R. (2020). An architectural risk analysis of machine learning systems: Toward more secure machine learning. Berryville Institute of Machine Learning, Clarke County, VA.

[3] McGraw, G., Figueroa, H., McMahon, K., & Bonett, R. (2024). An architectural risk analysis of large language models: Applied machine learning security. Technical report, Berryville Institute of Machine Learning (BIML), Berryville, VA.

[4] Shostack, A. (2014). Elevation of privilege: Drawing developers into threat modeling. In 2014 USENIX Summit on Gaming, Games, and Gamification in Security Education (3GSE 14).